Hey there, folks! Pull up a chair (or stand at your fancy adjustable desk – no judgment here). We need to have a heart-to-heart about the information cesspool we’re all wading through daily. You know what I’m talking about – that endless stream of “facts” flooding your feeds, some legit, many… not so much.

Does this sound familiar? You’re in a meeting, and someone confidently states, “I read that AI will replace all developers by 2025!” Your eye starts twitching, and you can feel your blood pressure rising. You know it’s not true, but how do you even begin to unpack all the layers of wrong in that statement?

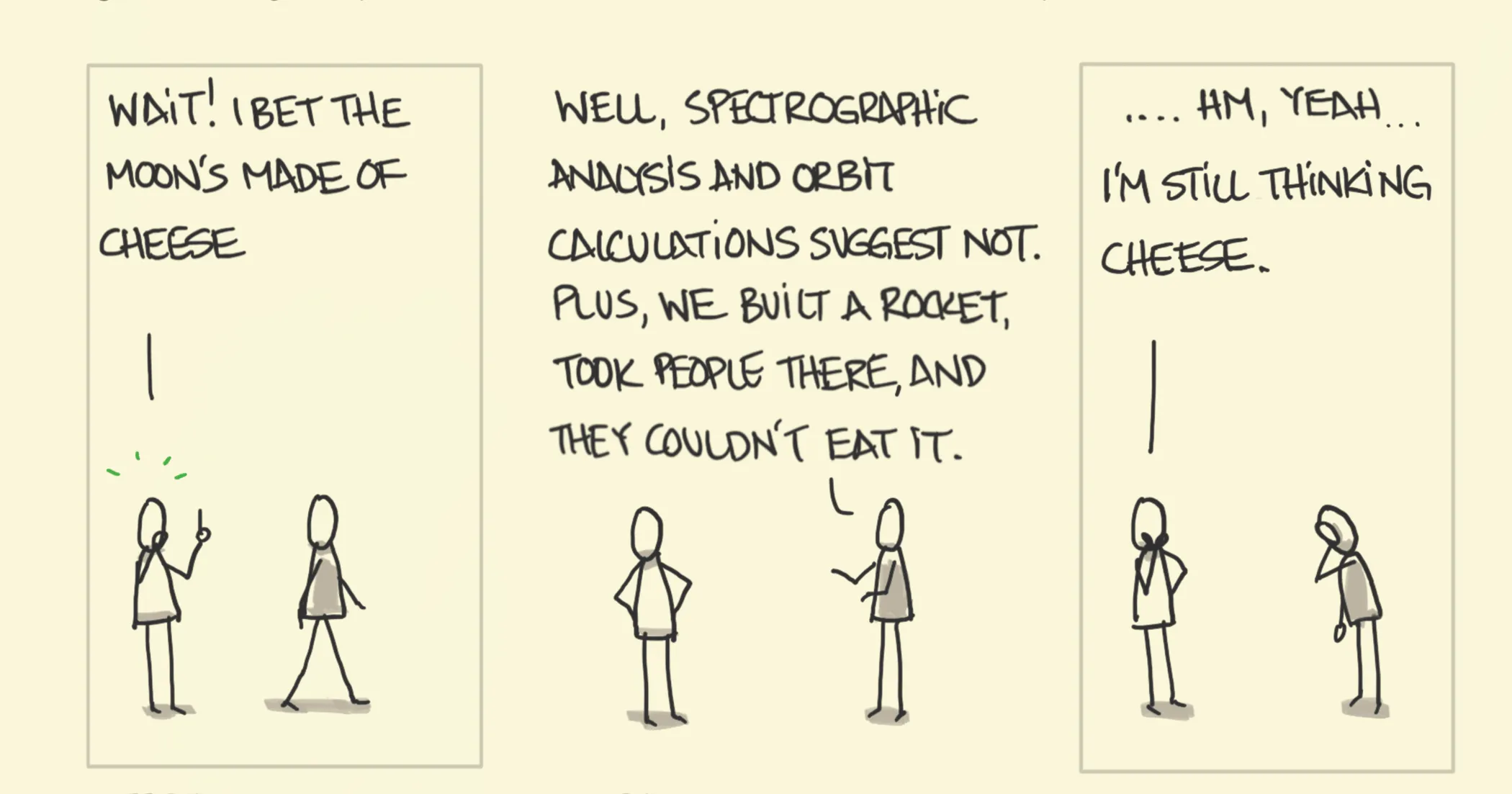

Welcome to the world of Brandolini’s Law, also known as the Bullshit Asymmetry Principle. Italian programmer Alberto Brandolini coined this term back in 2013, and it goes like this:

The amount of energy needed to refute bullshit is an order of magnitude bigger than that needed to produce it.

Brandolini had his ‘aha’ moment while reading Kahneman’s “Thinking, Fast and Slow” and watching a political interview. Sound familiar? How many of us have had similar epiphanies while doom-scrolling through Twitter or sitting through a buzzword-laden presentation?

As someone who’s been in the tech trenches for years, I’ve seen this principle in action more times than I care to count. I’ve made my share of mistakes, too – like the time I spent hours crafting a detailed rebuttal to a coworker’s flawed architectural proposal, only to realize I could have saved time by asking a few pointed questions instead.

So, why should we care about Brandolini’s Law? Well, in an industry that moves at the speed of light, where decisions can make or break products and careers, understanding how misinformation spreads (and how to combat it) isn’t just nice-to-have – it’s mission-critical.

Let’s dive in and explore why BS spreads like wildfire, and more importantly, what we can do about it. Trust me, by the end of this post, you’ll be better equipped to navigate the BS maze we call the modern information landscape.

Brandolini’s Law: The Hidden Bug in Our Information Processing

The Core of the Issue

At its heart, Brandolini’s Law is about asymmetry. Think of it like technical debt, but for information. It’s quick and easy to accumulate, but cleaning it up? That’s a whole other sprint. Creating misinformation is as simple as making an assertion. Debunking it? That’s where things get tricky. You need to:

- Research the facts

- Understand the context

- Craft a clear, logical argument

- Present your case effectively

- Deal with potential pushback

It’s like comparing the effort of introducing a bug versus fixing one. We all know which is easier, right?

Real-world Examples in Tech

Let’s look at some scenarios where Brandolini’s Law plays out in our world:

- Framework Fatigue: Remember when someone declared “jQuery is dead” around 2015? That simple statement took months of benchmark tests, migration case studies, and heated GitHub discussions to address.

- Security FUD: How about the time someone in marketing said, “Blockchain makes everything unhackable!” Cue the collective groan from every security expert within earshot.

- AI Hype: We’ve all heard wild claims about AI capabilities. Debunking these often requires explaining complex concepts like machine learning limitations, bias in training data, and the nuances of natural language processing.

The Viral Nature of Tech BS

So why does misinformation spread faster than the latest JavaScript framework? A few reasons:

- Complexity vs. Simplicity: Our work is complex. Simplified (often incorrect) explanations are easier to grasp and share.

- Dunning-Kruger Effect: In tech, a little knowledge can be dangerous. Those with limited understanding tend to overestimate their competence, and they often speak the loudest.

- Hype Cycles: Our industry thrives on the “next big thing.” This creates fertile ground for exaggerated claims and unrealistic expectations.

- Echo Chambers: Thanks to algorithmic feeds and specialized communities, it’s easy for misinformation to be amplified within like-minded groups.

Here’s a question to ponder: How many times have you seen a flashy tech headline and shared it before diving into the details? I’ll admit, I’ve been guilty of this. It’s a humbling reminder that we’re all susceptible to the allure of exciting claims.

The key is to develop a healthy skepticism without becoming cynical. It’s a balancing act, for sure, but an essential skill in our field. In the next section, we’ll explore some strategies to help us navigate this tricky terrain.

Remember, recognizing the problem is the first step. Now, let’s talk solutions. How can we, as tech leaders, combat this tide of misinformation without burning ourselves out? Stick around – it’s about to get interesting.

The Psychology Behind the BS: Why Our Brains Love Bad Info

Alright, let’s put on our amateur psychologist hats for a moment. Understanding why our brains are so susceptible to misinformation is crucial if we want to combat it effectively.

Confirmation Bias: The Echo Chamber in Our Heads

We’ve all been there. You have a hunch about a particular technology or approach, and suddenly, every article you read seems to confirm your belief. That’s confirmation bias in action, folks.

Here’s a personal example: Early in my career, I was convinced that PHP was the be-all and end-all of web development. I eagerly consumed every article praising PHP and dismissed criticisms as uninformed. It took a challenging project and a patient mentor to help me see the bigger picture.

The lesson? Actively seek out information that challenges your views. It’s uncomfortable, but it’s the only way to grow.

The Dunning-Kruger Effect: When a Little Knowledge is Dangerous

Have you ever sat in a meeting where the least knowledgeable person was the most confident? Welcome to the Dunning-Kruger effect.

In tech, this often manifests as the “I watched a YouTube video, so I’m an expert” syndrome. It’s why you get junior devs confidently declaring they can rewrite the entire codebase in a weekend, or why that one sales guy insists that adding blockchain will solve all your problems.

The antidote? Cultivate humility. Remember, the more you learn, the more you realize how much you don’t know.

Cognitive Dissonance: Why Changing Minds is Hard

Ever tried to convince someone that their favorite programming language isn’t the best choice for a particular project? It’s like trying to teach a cat to bark.

This resistance is rooted in cognitive dissonance: the very real mental discomfort we feel when new information conflicts with what we already believe.

I remember the resistance I felt when I first encountered arguments against using object-relational mapping (ORM) tools. I had invested so much time learning and using ORMs that the idea they might not be the best solution in all cases was genuinely uncomfortable.

The takeaway? Be patient when trying to change minds – including your own. It’s a process, not an event.

The Availability Heuristic: Why Recent and Dramatic Events Skew Our Perception

Quick, what’s more likely to take down your system: a sophisticated hacker attack or a misconfigured server? If you thought “hacker attack,” you’re falling prey to the availability heuristic.

Our brains tend to overestimate the likelihood of events that are easy to recall – usually because they’re recent, dramatic, or emotionally charged. It’s why after a high-profile security breach, everyone suddenly becomes obsessed with security, sometimes at the expense of other crucial aspects of development.

To counter this, we need to train ourselves to look at the data, not just our immediate recollections. When making decisions, ask yourself: “Am I basing this on solid evidence, or just on what comes easily to mind?”

The Bandwagon Effect: Why We Jump on Tech Trends

Remember when everyone was adding “AI” to their product names, regardless of whether they were using AI or not? That’s the bandwagon effect in action.

It’s natural to want to follow trends – nobody wants to be left behind in the fast-moving tech world. But this tendency can lead us to adopt technologies or practices without properly evaluating their merit.

I’ll admit, I once pushed for adopting a trendy new JavaScript framework for a project, mainly because “everyone was using it.” It ended up being overkill for our needs and actually slowed us down.

The lesson? Always ask “why” before jumping on a new trend. Will it genuinely add value, or are you just afraid of missing out?

Understanding these psychological quirks doesn’t make us immune to them, but it does give us a fighting chance. In the next section, we’ll look at practical strategies for combating misinformation in tech, armed with this knowledge of why our brains are so easily led astray.

Remember, the goal isn’t to become paranoid or cynical. It’s to develop a healthy skepticism that allows us to navigate the complex world of tech with clarity and wisdom. After all, isn’t that what being a tech leader is all about?

The Challenge of Debunking Misinformation: Why It’s Like Debugging a Legacy Codebase

Alright, we’ve talked about why BS spreads and why our brains love it. Now, let’s get into the nitty-gritty of why debunking this stuff is harder than getting two APIs to play nice.

Time and Effort: The Resource Allocation Problem

Imagine you’re managing a project. Suddenly, a team member comes to you with a “brilliant” idea they read about on an obscure tech blog. It sounds fishy, but they’re excited. What do you do?

- Option A: Spend hours researching, testing, and crafting a detailed explanation of why it won’t work.

- Option B: Say “interesting, but let’s stick to the plan” and move on.

If you’re under deadline pressure (and when aren’t we?), Option B is tempting. But here’s the rub – if you don’t address it, that idea might fester and resurface later, causing even more headaches.

I once had a junior developer convinced that we should rewrite our entire backend in Brainfuck for “improved security through obscurity.” It took an entire afternoon to walk through why this was, to put it mildly, not a good idea. Time well spent? Absolutely. But it’s easy to see why many leaders might avoid these conversations.

The takeaway? Addressing misinformation is an investment. It costs time upfront but saves a ton of trouble down the line.

Emotional Investment: Why People Cling to Bad Ideas

Ever tried to tell someone their baby is ugly? That’s what it feels like to debunk an idea someone’s emotionally invested in.

In tech, we often tie our identities to our skills and knowledge. Telling a Ruby developer that Python might be better for a particular project isn’t just challenging their technical decision – it’s challenging their identity as a Ruby expert.

I remember the resistance I felt when someone suggested moving away from a tech stack I had championed. It felt like a personal attack, even though it was just a pragmatic decision.

The lesson? When debunking, focus on the idea, not the person. Create an environment where changing one’s mind is seen as a strength, not a weakness.

The Backfire Effect: When Debunking Backfires

Here’s a fun quirk of human psychology: sometimes, when presented with facts that contradict their beliefs, people double down on those beliefs. Welcome to the backfire effect.

I once spent hours putting together a presentation debunking common myths about AI. The result? Some people came out even more convinced of those myths. Facepalm moment, for sure.

So, what’s a tech leader to do? Instead of coming in guns blazing with facts and figures, try the Socratic method. Ask questions that lead people to question their own assumptions. It’s slower, but often more effective.

The Moving Goalpost: Whack-a-Mole with Ideas

Ever dealt with scope creep in a project? Debunking misinformation is like that, but worse. As soon as you address one aspect, another pops up.

You might debunk the idea that “AI will take all our jobs by next year,” only to have the goalposts move to “Okay, but it will definitely happen in five years!”

The strategy here? Focus on underlying principles rather than specific claims. Teach people how to think critically about tech predictions rather than debunking each one individually.

The Echo Chamber Effect: Shouting into the Void

In our hyper-connected world, it’s easier than ever for people to find communities that reinforce their beliefs, no matter how misguided. Try posting about the benefits of monolithic architecture in a microservices fan group and see what happens.

Breaking through these echo chambers is tough. It requires patience, empathy, and a willingness to engage with people where they are, not where you think they should be.

So, what’s the solution to all these challenges? Well, there’s no silver bullet (and if anyone tries to sell you one, refer back to Brandolini’s Law). But in the next section, we’ll explore some strategies that can help us navigate these treacherous waters.

Remember, as tech leaders, our job isn’t just to know the tech – it’s to guide our teams and organizations through the maze of information and misinformation out there. It’s not easy, but hey, if we wanted easy, we’d have become… well, I can’t think of an easy job right now, but you get the point.

Stay tuned for our next section, where we’ll dive into practical strategies for fighting the good fight against BS in tech. Trust me, it’ll be more fun than a Friday afternoon deploy. Well, maybe.

Strategies to Counter BS Effectively: Your Tech Leader’s Toolkit

Alright, fellow truth-seekers, it’s time to arm ourselves with some practical tools for fighting the good fight against misinformation. Think of this as your Swiss Army knife for cutting through BS.

Apply the Scientific Method: Not Just for Lab Coats

Remember that science class you dozed through in high school? Turns out, it’s pretty useful in the tech world too. The scientific method isn’t just for people in white coats — it’s a powerful tool for any tech leader.

Here’s how to apply it:

- Observation: “Hm, our new microservices architecture seems to be causing more problems than it solves.”

- Question: “Is a microservices architecture really the best fit for our current needs and capabilities?”

- Hypothesis: “A well-structured monolith might be more suitable for our team size and project complexity.”

- Experiment: “Let’s prototype a monolithic version of one of our services and compare performance.”

- Analyze results: “Look at that! The monolith is performing better and our team is shipping features faster.”

- Conclusion: “For our current needs, a monolith is the way to go. We’ll revisit microservices when we scale further.”

I’ve used this approach to challenge my own assumptions about “best practices.” It’s amazing how often the data surprises you when you actually test your beliefs.

Remain Skeptical: Channel Your Inner Myth Buster

In the words of the great philosopher Shrek, “Ogres are like onions.” Well, so is information in the tech world — it has layers. Don’t just peel off the first one and call it a day.

I like to use the CRAAP test (yes, that’s really what it’s called):

- Currency: Is this information up-to-date?

- Relevance: Does this apply to our specific situation?

- Authority: Is the source credible?

- Accuracy: Can we verify this independently?

- Purpose: Why is this information being shared?

I once avoided a costly mistake by applying this test to a “revolutionary” new database technology. Turns out, it was neither revolutionary nor particularly new. Just well-marketed.
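If you want the CRAAP test to become a habit rather than a vibe (say, as part of a tech-evaluation template), it can be as simple as an explicit checklist. Here’s a minimal Python sketch. The `CraapReview` class, the yes/no framing of the Purpose question, and the all-or-nothing pass rule are my own assumptions for illustration, not part of the official test.

```python
from dataclasses import dataclass, fields


@dataclass
class CraapReview:
    """Answers to the CRAAP test for one claim. Each criterion is True if the claim passes it."""

    claim: str
    currency: bool   # Is this information up-to-date?
    relevance: bool  # Does this apply to our specific situation?
    authority: bool  # Is the source credible?
    accuracy: bool   # Can we verify this independently?
    purpose: bool    # Assumed yes/no framing: is it shared to inform rather than to sell?

    def failed_criteria(self) -> list[str]:
        """Names of the criteria this claim did not pass, in CRAAP order."""
        return [
            f.name
            for f in fields(self)
            if f.name != "claim" and not getattr(self, f.name)
        ]

    def passes(self) -> bool:
        """Assumed rule: a claim passes only if it clears every criterion."""
        return not self.failed_criteria()


# Hypothetical example, not a real product evaluation.
review = CraapReview(
    claim="Vendor says: 'our database is revolutionary and brand new'",
    currency=True,
    relevance=True,
    authority=False,  # the only source is the vendor's own marketing
    accuracy=False,   # no independent benchmarks to check
    purpose=False,    # it's a sales pitch
)
print(review.passes())           # False
print(review.failed_criteria())  # ['authority', 'accuracy', 'purpose']
```

In practice you might weight the criteria, or treat a single failure as a signal to dig deeper rather than an automatic rejection. The point is simply to make every question explicit so none gets skipped.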

Assess Impact vs. Effort: Pick Your Battles

Not all hills are worth dying on. Sometimes, letting a small misconception slide is better than burning yourself out fighting every little skirmish.

Try this:

- High Impact, Low Effort: These are your low-hanging fruit. Go for it!

- High Impact, High Effort: Worth it for big issues. Put in the work.

- Low Impact, Low Effort: Why not? If it’s easy, do it.

- Low Impact, High Effort: Avoid these time-sinks like the plague.

I use a simple 2×2 matrix to visualize this. It’s saved me from many a futile debate about tabs vs. spaces. (But seriously, spaces are better. Fight me.)
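For the spreadsheet-averse, here’s that 2×2 matrix as a tiny Python sketch. The `Level` enum, the `triage` and `prioritize` functions, the priority ordering between quadrants, and the example backlog are all hypothetical, made up for illustration.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# (impact, effort to refute) -> (priority, suggested action); lower priority = handle sooner.
# The actions mirror the four quadrants above; the priority ordering is an assumption.
QUADRANTS = {
    (Level.HIGH, Level.LOW): (0, "Go for it: low-hanging fruit"),
    (Level.HIGH, Level.HIGH): (1, "Put in the work: it's a big issue"),
    (Level.LOW, Level.LOW): (2, "Why not? It's easy"),
    (Level.LOW, Level.HIGH): (3, "Avoid: time-sink"),
}


def triage(impact: Level, effort: Level) -> str:
    """Suggested action for a misconception, given its impact and the effort to refute it."""
    return QUADRANTS[(impact, effort)][1]


def prioritize(backlog: list[tuple[str, Level, Level]]) -> list[tuple[str, str]]:
    """Sort (claim, impact, effort) items by quadrant priority and attach the suggested action."""
    ordered = sorted(backlog, key=lambda item: QUADRANTS[(item[1], item[2])][0])
    return [(claim, triage(impact, effort)) for claim, impact, effort in ordered]


# Hypothetical backlog of misconceptions floating around a team.
backlog = [
    ("Tabs are better than spaces", Level.LOW, Level.HIGH),
    ("We don't need backups, the cloud handles it", Level.HIGH, Level.LOW),
    ("A Rust rewrite will fix our latency", Level.HIGH, Level.HIGH),
]
for claim, action in prioritize(backlog):
    print(f"{claim}: {action}")
```

The backups myth comes out on top and the tabs debate sinks to the bottom, which is exactly where it belongs.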

Push Back the Burden of Proof: You Made the Claim, You Back It Up

When someone comes to you with a wild claim about the latest tech trend, resist the urge to immediately start debunking. Instead, channel your inner toddler and ask, “Why?”

“Blockchain will solve all our problems!” “Interesting. Can you explain how exactly it would address our specific challenges?”

This approach does two things:

- It forces the person to think critically about their own claim.

- It saves you from doing all the heavy lifting in the debate.

Use Occam’s Razor: The Simplest Explanation is Usually Right

In tech, we love complexity. But often, the simplest explanation is the correct one.

Is your app slow because you need to rewrite it in Rust, or because you have an N+1 query problem? Nine times out of ten, it’s the latter.

Occam’s Razor helps cut through elaborate theories and focus on the most likely causes. It’s saved me countless hours of chasing wild geese. Or are they red herrings? Whatever, you get the point.
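To make the N+1 problem concrete, here’s a minimal sketch using Python’s built-in `sqlite3` module and an in-memory database. The `authors`/`posts` schema and the `CountingDB` wrapper are hypothetical. It counts queries instead of timing them, because an in-memory database is too fast for the difference to show; in a real app, each of those extra queries is typically a network round trip.

```python
import sqlite3


class CountingDB:
    """Wraps a sqlite3 connection and counts how many queries we send."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.queries = 0

    def query(self, sql: str, params: tuple = ()) -> list[tuple]:
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def setup() -> CountingDB:
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
        INSERT INTO authors VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Linus');
        INSERT INTO posts (author_id, title) VALUES (1, 'a1'), (1, 'a2'), (2, 'g1'), (3, 'l1');
    """)
    return CountingDB(conn)


def titles_n_plus_one(db: CountingDB) -> dict[str, list[str]]:
    """1 query for the authors, then 1 more per author: N+1 queries in total."""
    result = {}
    for author_id, name in db.query("SELECT id, name FROM authors ORDER BY id"):
        rows = db.query(
            "SELECT title FROM posts WHERE author_id = ? ORDER BY id", (author_id,)
        )
        result[name] = [title for (title,) in rows]
    return result


def titles_single_join(db: CountingDB) -> dict[str, list[str]]:
    """The same data in one query. Note: an inner JOIN drops authors with no posts;
    use LEFT JOIN if you need them."""
    result = {}
    rows = db.query(
        "SELECT a.name, p.title FROM authors a "
        "JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    )
    for name, title in rows:
        result.setdefault(name, []).append(title)
    return result


db = setup()
slow = titles_n_plus_one(db)
print(db.queries)    # 4: one for authors, plus one per author
db.queries = 0
fast = titles_single_join(db)
print(db.queries)    # 1
print(slow == fast)  # True
```

Three authors cost four queries with the loop and one with the JOIN; with 10,000 authors, the loop would send 10,001. That’s the boring, Occam-approved fix, and no rewrite required.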

Employ Hitchens’s Razor: What Can Be Asserted Without Evidence Can Be Dismissed Without Evidence

This is your get-out-of-jail-free card for dealing with baseless claims. If someone can’t provide any evidence for their assertion, you’re under no obligation to provide evidence against it.

“We need to rewrite everything in WebAssembly!” “Do you have any benchmarks or case studies showing it would improve our specific application?” “Well, no, but everyone’s talking about it!” “Let’s table that idea until we have some concrete data to support it.”

Boom. Hitchens’s Razor in action.

Leverage Permission Structures: Make It Easy for People to Change Their Minds

Changing your mind can feel like admitting defeat. As leaders, we need to create an environment where it’s okay — even encouraged — to update your views based on new information.

Try phrases like:

- “I used to think X, but then I learned Y.”

- “That’s an interesting perspective. It’s making me reconsider my stance on Z.”

- “I was wrong about that. Thanks for helping me see it differently.”

By modeling this behavior, you give your team permission to do the same.

Remember, the goal isn’t to “win” every argument. It’s to collectively move towards better understanding and decision-making. Every so often that means admitting you were wrong. And that’s okay. In fact, it’s more than okay — it’s how we grow.

In our final section, we’ll talk about how to foster a culture of critical thinking in your team. Because fighting BS isn’t a one-person job — it’s a team sport. And I promise, it’s more fun than your company’s mandatory team-building exercises. Well, most of them anyway.

Fostering a Culture of Critical Thinking: Making BS Detection a Team Sport

Alright, we’re in the home stretch. You’ve got your BS-busting toolkit, but let’s face it — you can’t fight this battle alone. It’s time to level up your entire team.

Lead by Example: Be the Change You Want to See in the Codebase

As leaders, we set the tone. If we want our teams to think critically, we need to walk the walk. Here’s how:

- Admit when you’re wrong. Loudly and proudly. I once pushed for a trendy new framework that ended up being a square peg in a round hole. Instead of doubling down, I called a team meeting and said, “Folks, I screwed up. Here’s what I learned.” The result? Team members felt safer admitting their own mistakes.

- Ask questions. Lots of them. When someone proposes an idea, resist the urge to immediately agree or disagree. Instead, ask probing questions. “How would this scale?” “What are the potential downsides?” “Are there any case studies we can look at?”

- Embrace uncertainty. Get comfortable saying “I don’t know, but let’s find out.” It shows that it’s okay not to have all the answers, and models how to approach uncertainty.

Create a ‘Red Team’ Culture: Professional Devil’s Advocates

In cybersecurity, ‘red teams’ try to break into systems to test their defenses. Apply this concept to your decision-making process:

- Assign a ‘red team’ for important decisions. Their job? To argue against the proposed solution, regardless of their personal opinions.

- Rotate this role. Everyone should get a chance to flex their critical thinking muscles.

- Reward good questions, not just good answers. “Great question!” should be music to your team’s ears.

I’ve seen this approach prevent numerous costly mistakes. Plus, it’s a great way to develop your team’s critical thinking skills.

Implement ‘Assumption Audits’: Spring Cleaning for Your Brain

Regular code reviews? Check. Regular assumption reviews? It’s time to make it a thing.

- Schedule quarterly ‘assumption audits’. Get the team together and list out all the assumptions you’re making about your product, your users, your tech stack, etc.

- Challenge each assumption. “Do we know this for sure?” “What evidence do we have?” “What if this isn’t true?”

- Prioritize assumptions to test. You can’t test everything, so focus on the assumptions that, if wrong, would have the biggest impact.

This practice has led to some of our biggest breakthroughs. Turns out, many of our ‘truths’ were just comfortable myths.

Encourage Diverse Perspectives: Your Echo Chamber Escape Hatch

Echo chambers are BS breeding grounds. Break them up by:

- Hiring for cognitive diversity. Different backgrounds lead to different perspectives. And different perspectives lead to better BS detection.

- Rotating team members between projects. Fresh eyes can spot assumptions long-timers have stopped seeing.

- Bringing in outside experts. Sometimes you need an outsider’s perspective to shake things up.

Make Learning a Core Value: Stay Hungry, Stay Foolish

In tech, standing still is moving backwards. Foster a culture of continuous learning:

- Set up a learning budget. Encourage team members to attend conferences, take courses, or buy books.

- Start a team book club. Pick a relevant book each month and discuss its implications for your work.

- Implement ‘TIL’ (Today I Learned) sharing. Start team meetings with everyone sharing something new they’ve learned.

Remember, the goal isn’t to create a team of cynics. It’s to build a group of curious, critical thinkers who can navigate the complex world of tech with confidence.

Conclusion: The Never-Ending Battle

Fighting BS in tech is like playing whack-a-mole while riding a unicycle on a tightrope. Over a pit of buzzword-spewing alligators. It’s challenging, it’s constant, but it’s also vitally important.

By understanding Brandolini’s Law, recognizing our own biases, and implementing strategies to combat misinformation, we can create better products, make smarter decisions, and maybe, just maybe, make the tech world a little bit saner.

Remember, every time you question an assumption, challenge a baseless claim, or admit to a mistake, you’re making our industry a little bit better. It’s not easy, but then again, if we wanted easy, we wouldn’t be in tech, would we?

So go forth, fellow BS-busters. Ask questions. Challenge assumptions. Admit when you’re wrong. And for the love of all that is holy, please stop trying to blockchain all the things.

Now, if you’ll excuse me, I need to go fact-check this article. Can’t be too careful, you know?