The Cone of Uncertainty, Fibonacci scale, and Cynefin walk into a sprint planning session…

A product manager walked into a refinement session a few years back, dropped a one-pager on the table, and said: “How long will this take?” The team I was leading had been through enough of these moments to know the ritual. Someone would say a number. That number would get written down. Two weeks later, it would become a deadline.

I stopped the session. I told her we couldn’t answer that question yet - not because we were hiding something, but because the honest answer was “somewhere between three weeks and five months, and I’m not joking.” What followed was an uncomfortable thirty minutes that probably saved us three months of political fallout. We talked about what we actually knew versus what we were assuming. We agreed on what had to be true before the estimate could meaningfully narrow. We walked out with a plan to answer the question - not an answer.

That’s the conversation most engineering teams never have. And it’s the conversation the #NoEstimates movement, for all its righteous frustration, accidentally makes harder to have.

The Problem #NoEstimates Actually Solved

I want to be fair here. The people who built the #NoEstimates movement weren’t being irresponsible. They were reacting to something real and genuinely damaging: the industrial-scale misuse of estimates as performance targets.

The pattern they were fighting goes like this. A team, knowing very little about a problem, is asked for an estimate. They give one - probably optimistic, because there’s social pressure to be bold. That number gets written into a Confluence document, escalated to a VP, packaged into a roadmap, and committed to a customer. Six months later, when reality diverges from the estimate (as it always does), the team gets blamed for “missing” a deadline they never agreed to. The estimate was not a forecast. It was, from the moment it left their mouths, a commitment - they just didn’t know it yet.

That pathology is real. I’ve lived it. The frustration is valid.

But the solution - don’t estimate - is like responding to a broken thermometer by deciding temperature doesn’t exist. The thermometer was the problem. Not the concept of heat.

What often gets lost in that frustration: your estimate doesn’t change the actual work. Maarten Dalmijn puts it well in his writing on estimation - reality is unaffected by your guess (his article is well worth reading). The jar contains whatever number of pennies it contains, regardless of what you said. Delivering a feature takes as long as it takes. What the estimate influences is not the work itself but the expectations around it - and managing those expectations, honestly and clearly, is very much worth doing (and it’s part of the job).

Why Organizations Actually Need Estimates

Here is the thing that the #NoEstimates literature almost always sidesteps: estimates are not primarily for the team doing the work. They are for the organization around the team.

Three specific situations make estimation non-negotiable for anyone operating inside a larger organization.

External commitments. When your product is part of a customer contract, when a launch is tied to a marketing campaign, when a regulatory deadline exists that your company cannot move: someone has to make a call about what’s feasible. That call requires some model of how long things take. “We don’t estimate” is not an answer a business can give a customer. It’s a sentence that ends contracts.

Inter-team dependencies. As soon as you have more than one team, you have coordination problems. Team B cannot start their work until Team A finishes theirs. If Team A refuses to give any forecast of when they’ll be done, Team B cannot plan. Not because planning is sacred, but because people - real people - need to make decisions about where to point their attention next. A rough signal (“probably six weeks, could be eight”) is infinitely more useful than silence. This is not about control. It’s about respect for the humans downstream of you.

ROI calculations. Every product decision is implicitly a resource allocation decision. Should we build Feature X or Feature Y? To answer that, you need some model of relative cost. If everything is unknowable, you cannot make reasoned trade-offs - you’re just guessing. And if you’re going to guess anyway, you might as well make it a structured guess with explicit assumptions attached.

None of these needs require precise estimates. They require useful ones. That distinction is everything.

What Joseph Pelrine Showed Us About Why This Is Hard

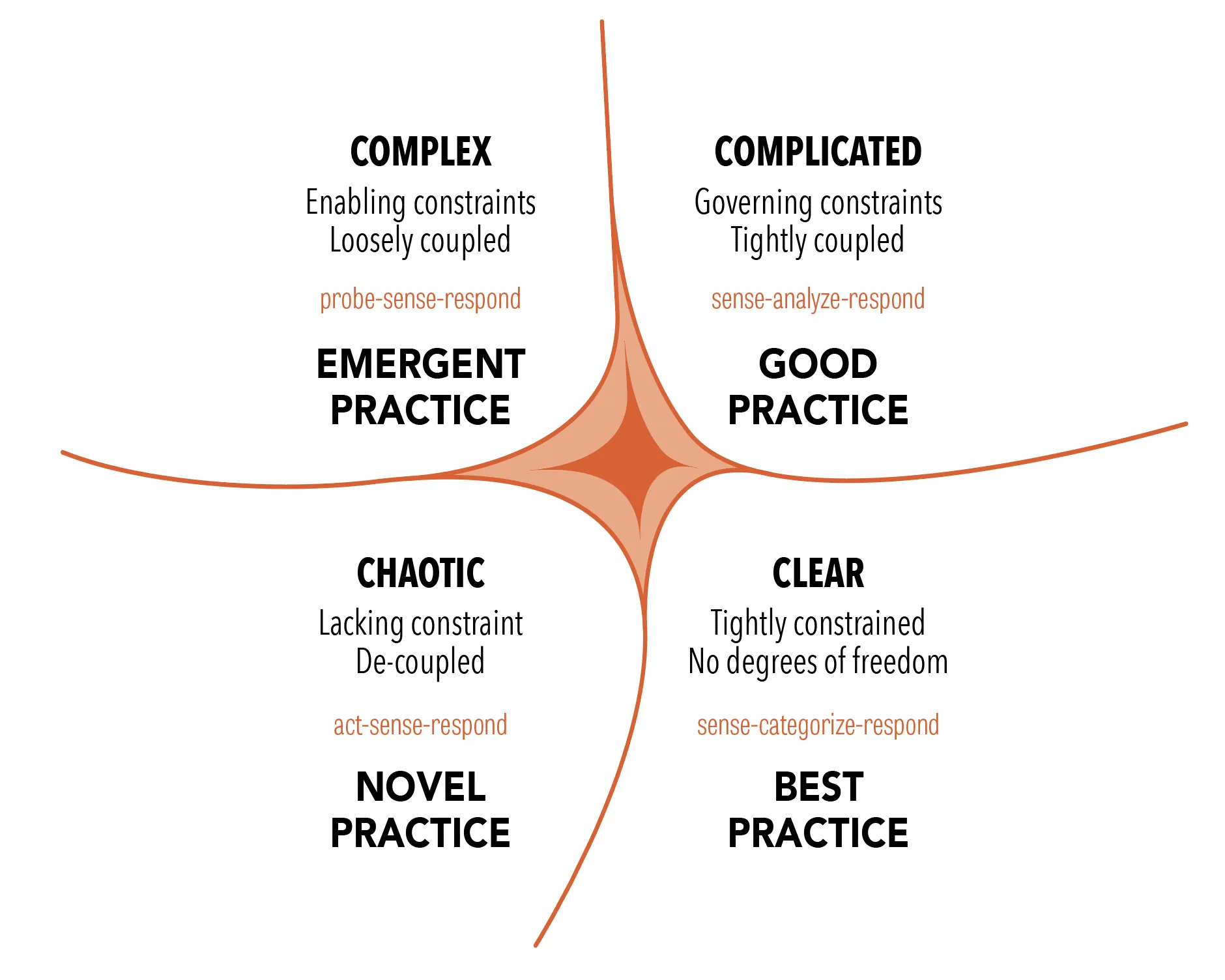

Joseph Pelrine, Europe’s first Certified Scrum Trainer (and someone with genuinely down-to-earth, no-BS talks!), spent years researching software agility through the lens of social complexity science. In this talk, he describes a fascinating exercise he ran with over 300 people working in Agile software development. Using the Cynefin framework - Dave Snowden’s sense-making model that classifies problems into domains: Clear, Complicated, Complex, and Chaotic - he asked participants to place their everyday work activities into the appropriate domain.

The Cynefin framework

Task estimation, consistently and repeatedly, landed in the Complex domain.

Not Complicated. Complex.

This distinction matters enormously. In the Complicated domain, cause and effect are knowable - you might need an expert to trace the relationship, but it’s traceable. A bridge engineer can calculate load tolerances. A surgeon can estimate recovery time based on procedure type and patient profile. Expertise produces reliable forecasts.

In the Complex domain, cause and effect are only knowable in retrospect. The system is too entangled, too context-dependent, too sensitive to small changes. You don’t analyze and then act. You probe, observe what happens, and respond. Your knowledge is inherently local and temporary.

Task estimation sits here because software development is not manufacturing. We are, almost always, building something that has never existed before, on top of a codebase that is unique, with a team whose dynamics are unique, solving a problem that is slightly different from every previous problem. The carpenter analogy doesn’t work: cabinets are roughly like other cabinets. Features are not roughly like other features.

What Pelrine’s work tells us is not “don’t estimate.” It tells us: you are applying an ordered-domain tool to an unordered-domain problem. The answer is not to throw away the tool. It’s to use it with the appropriate epistemic humility - and to stop pretending the output is a commitment.

This also reframes an honest truth that most estimation advice dances around. Even great teams only produce mediocre estimates. Not because they’re not skilled, but because there’s a hard ceiling on how accurate you can be in a Complex domain. The goal is not to get estimates right. The goal is to make useful decisions despite their inherent inaccuracy. Confusing those two goals is where most estimation dysfunction begins.

The Cone Doesn’t Lie, But You’re Ignoring What It’s Saying

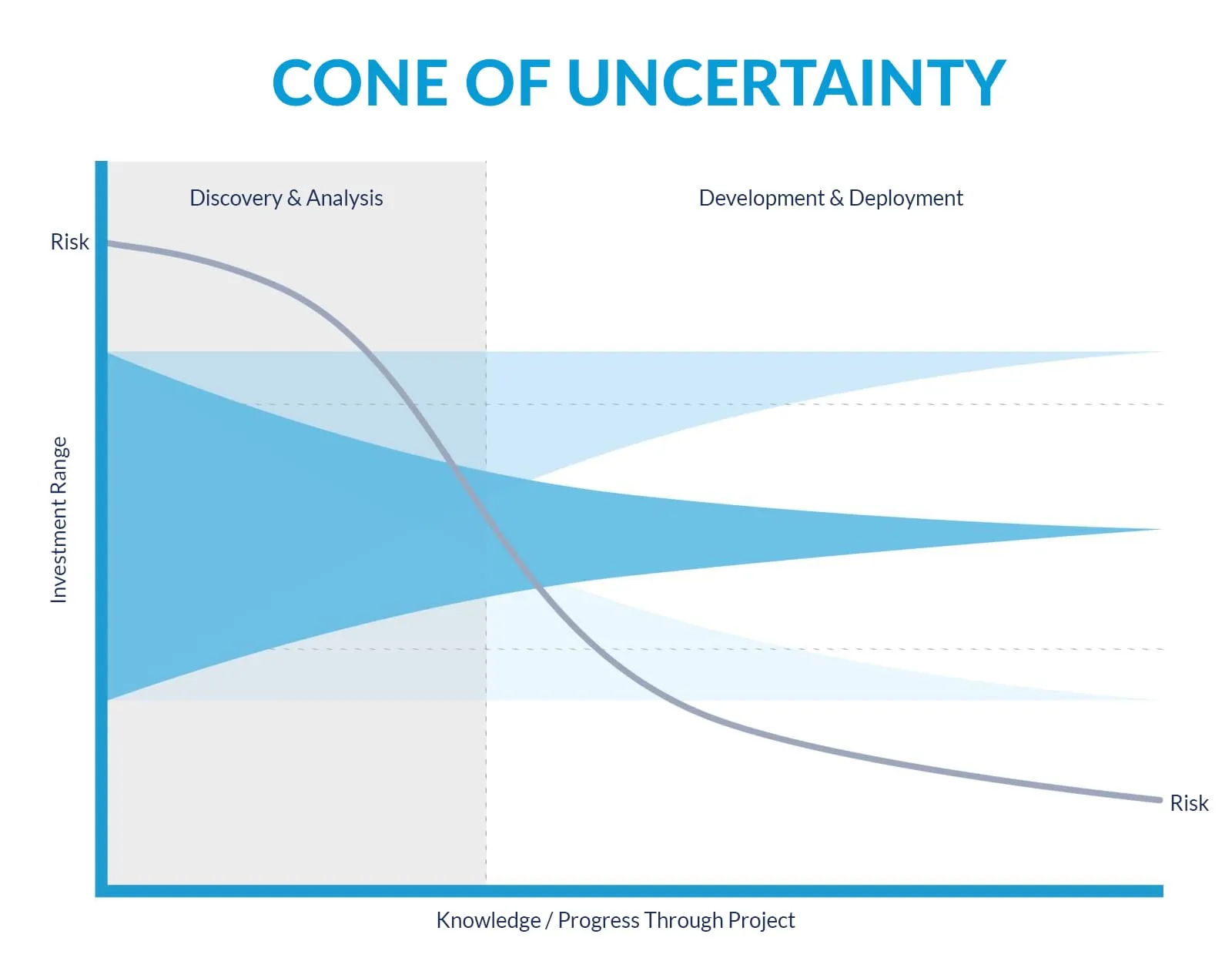

Steve McConnell’s Cone of Uncertainty is one of those concepts that everyone nods at and almost no one actually applies.

The basic shape is simple: early in a project, your estimates can be off by a factor of 4 in either direction (and this number is still just an estimate). The real effort can land anywhere from a quarter of your estimate to four times it: a 16x total range. As the project progresses and unknowns get resolved - requirements clarify, architecture solidifies, the hard problems surface and get solved - the cone narrows. By the time you’re close to done, your estimates are nearly exact. (You also, at that point, no longer need them.)

That last part is the irony: estimates become more reliable closer to completion, which is also when they’re least useful. Early on, when you’d most benefit from knowing the effort involved - when you can still change course or kill the project without wasted months - is precisely when you know the least. Most organizations make their firmest commitments at exactly that point.

Two more things are worth sitting with.

- First: the cone represents the best case for skilled estimators. It is not describing average teams with average practices. It’s describing what’s possible when you’re actually good at this. Most teams do worse. If your team commits to a date at the initial concept stage, you are not making a plan. You are making a wish - and then arranging your calendar around it.

- Second: the cone narrows only when you make decisions that eliminate variability. Time alone does not narrow it. Defining scope narrows it. Resolving architectural unknowns narrows it. Actually writing code and discovering what’s hard narrows it. Spending three weeks in meetings does not. Building something teaches you more about its complexity than any amount of pre-work analysis. You quickly hit diminishing returns trying to reason your way to certainty. The real problems only surface when you start.

The practical consequence is something I started saying explicitly to stakeholders long ago: the quality of our estimate depends on how far along we are in the cone. I won’t give you a two-week number in week one. I will give you a range, and I’ll tell you what has to be true for us to narrow it.

Most stakeholders, when you explain the cone to them, accept this. They’re not asking for false precision because they enjoy it. They’re asking because nobody ever told them they were allowed to ask for a range.
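To make the arithmetic of the cone concrete, here is a minimal sketch of turning a single nominal number into a phase-dependent range. The 0.25x to 4x band at the initial concept stage is the "4x in either direction" figure from above; the multipliers for the later phases are the values commonly quoted alongside the cone and should be treated as illustrative assumptions, as should the phase names and the `estimate_range` and `spread` helpers.

```python
# Illustrative multipliers. Only the initial-concept band comes from the text
# above; the later phases are commonly quoted cone values, assumed here.
CONE = {
    "initial_concept": (0.25, 4.0),
    "approved_definition": (0.5, 2.0),
    "requirements_complete": (0.67, 1.5),
    "ui_design_complete": (0.8, 1.25),
}


def estimate_range(nominal_weeks: float, phase: str) -> tuple[float, float]:
    """Return (low, high) in weeks for a nominal estimate at a given phase."""
    low_factor, high_factor = CONE[phase]
    return nominal_weeks * low_factor, nominal_weeks * high_factor


def spread(phase: str) -> float:
    """Ratio of the high bound to the low bound at a given phase."""
    low_factor, high_factor = CONE[phase]
    return high_factor / low_factor


# Print the range for a nominal 8-week estimate at each phase.
for phase in CONE:
    low, high = estimate_range(8, phase)
    print(f"{phase}: {low:.1f} to {high:.1f} weeks (spread {spread(phase):.1f}x)")
```

An "8-week" guess at the initial concept stage is really a statement that the work is somewhere between 2 and 32 weeks. Saying that out loud is usually enough to start the conversation about what would narrow it.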

Why Fibonacci and Not Hours

When teams do estimate, the Fibonacci scale - 1, 2, 3, 5, 8, 13, 21 - often gets taught as a quirky Agile ritual without an explanation that actually satisfies anyone. Let me try to give you one.

The non-linearity is doing epistemic work.

When you estimate something as a 2 versus a 3, you’re saying: “I have a rough sense of the difference, but it’s marginal.” When you jump from 8 to 13, you’re encoding something much more important: the uncertainty band around this estimate is wider than the estimate itself. A 13-point story doesn’t mean “I know exactly how big this is, and it’s 13.” It means “I think this is in a category of work that is significantly more uncertain than an 8, and probably not as uncertain as a 21. Don’t ask me to be more precise than that.”

The gaps between Fibonacci numbers grow in a way that mirrors how complexity actually scales. Small things are roughly estimable. Large things become exponentially harder to predict because they contain more unknowns, more integration points, more opportunities for surprise. By the time a story is a 21 (or, some would say, 13), the honest conversation is: “This story should probably be split, because an estimate of 21 is essentially us saying we don’t understand the work yet.”
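One way to see the growing gaps is to print them. The sketch below is only an illustration of the scale's shape, not a claim about how any team's effort actually scales; `gaps` and `step_ratios` are hypothetical helper names.

```python
SCALE = [1, 2, 3, 5, 8, 13, 21]


def gaps(scale: list[int]) -> list[int]:
    """Absolute distance between each value and the next one on the scale."""
    return [b - a for a, b in zip(scale, scale[1:])]


def step_ratios(scale: list[int]) -> list[float]:
    """How many times larger each value is than the one before it."""
    return [b / a for a, b in zip(scale, scale[1:])]


print(gaps(SCALE))  # [1, 1, 2, 3, 5, 8]
print([f"{r:.2f}" for r in step_ratios(SCALE)])
```

The absolute gaps keep widening while the relative step stays in a narrow band (each value is roughly one and a half to two times the previous one). In other words, the scale only lets you express differences that are large relative to the size of the item, which is about as much precision as a big story can honestly support.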

The other underappreciated function of Fibonacci estimation is what happens during the estimation conversation. When one person says 3 and another says 13, the point value is almost irrelevant. What matters is why two people on the same team, looking at the same story, saw it so differently. Maybe one saw a dependency the other missed. Maybe one knew about a technical constraint that wasn’t in the ticket.

That conversation - the one forced by disagreement - is often where the real work of refinement happens.

The biggest value in estimating isn’t the estimate. It’s checking whether there is a common understanding. The number is almost a side effect. Remove the forcing function, and that shared understanding often doesn’t form until someone is three days into a task and hits the hidden complexity.
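As a small sketch of treating the spread of votes, rather than the final number, as the signal: the function below flags a round of votes as worth a conversation when the lowest and highest votes sit more than one step apart on the scale. The one-step threshold, the function names, and the voter names are all assumptions for illustration.

```python
SCALE = [1, 2, 3, 5, 8, 13, 21]


def needs_discussion(votes: dict[str, int], max_steps_apart: int = 1) -> bool:
    """True when the votes are spread over more scale steps than allowed.

    Every vote must be a value on SCALE; anything else raises ValueError.
    """
    if not votes:
        return False
    positions = [SCALE.index(v) for v in votes.values()]
    return max(positions) - min(positions) > max_steps_apart


def extremes(votes: dict[str, int]) -> tuple[list[str], list[str]]:
    """Names of the people who gave the lowest and the highest vote."""
    low, high = min(votes.values()), max(votes.values())
    lowest = sorted(name for name, v in votes.items() if v == low)
    highest = sorted(name for name, v in votes.items() if v == high)
    return lowest, highest


votes = {"ana": 3, "ben": 13, "cy": 5}  # hypothetical team
if needs_discussion(votes):
    lowest, highest = extremes(votes)
    print(f"Ask {lowest} and {highest} what they each saw in the story.")
```

Note that nothing here averages the votes. The output is a prompt for the two people at the extremes to explain their reasoning, which is where the hidden dependency or constraint tends to surface.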

This is why I find the #NoEstimates argument that “estimation meetings are waste” unpersuasive. Done right, those conversations are where alignment happens. You can absolutely run them badly - and most teams do. But the answer to a badly run meeting is not to cancel the meeting.

The Commitment Trap (And How to Avoid It)

The deepest dysfunction the #NoEstimates movement was responding to is this: estimates become commitments through social pressure, not logic. Nobody in a healthy organization should be able to take an estimate made under uncertainty and treat it as a promise. But it happens constantly, for understandable reasons: people need to plan, plans need dates, and dates need to come from somewhere.

There’s a related failure mode that’s just as damaging, and it’s about who creates the estimate. When people who aren’t doing the work invent a timeline and throw it over the fence to the teams, you get the worst of all worlds: an inaccurate estimate and a team with no ownership of the number. Now, when reality diverges - and it will - nobody knows whose fault it is, so everyone blames everyone. The people executing the work must always own the estimate. Imposing estimates on others is not optimism. It’s a blame-game waiting to happen.

When deadline obsession takes hold, something else happens too. Like a mirage in the desert, the looming date becomes the only thing anyone can see. Every conversation collapses into “how do we hit the deadline?” The question of whether you’re building the right thing - whether the work is actually good - slides quietly out of view. The deadline wasn’t wrong because the estimate was imprecise. It was wrong because the estimate became the mission.

The way I’ve navigated this is by separating three things that most organizations conflate, and by getting my stakeholders to agree on the distinction:

- An estimate is a probabilistic forecast made under current levels of uncertainty. It should come with a range and an explicit statement of what assumptions it rests on. “We think this is 4-6 weeks, assuming requirements don’t change and we get answers on the API question by next Friday.”

- A plan is a commitment to a process, not an outcome. “We will work for two weeks, review progress, and give you an updated forecast.” Plans are things you can keep. Estimates are things you can only be more or less accurate about.

- A commitment is a promise with consequences. It should be made rarely, deliberately, and only when the cone has narrowed enough to warrant it. Committing at the initial concept stage is not bold. It’s reckless. When somebody forces you to commit to something, try committing only to priorities, not timelines. If that doesn’t work, make the level of confidence part of the commitment.

The dysfunction happens when organizations treat estimates as commitments from the moment they’re spoken. The political solution to that - which is what #NoEstimates often actually is, in practice - is to never speak a number at all. I understand the instinct. But the cost is that your organization loses its ability to reason about resource allocation, sequence dependencies, or have honest conversations with external stakeholders.

The better solution is to teach the cone. Teach the stakeholders. Make the distinction between estimate and commitment explicit every single time you give a number. It’s harder than refusing to estimate, and it takes longer to work. But it builds trust, instead of just avoiding the situations where trust could be broken.

What Good Estimation Practice Actually Looks Like

I won’t pretend I have a perfect system. I don’t. But across the teams I’ve managed, a few patterns have consistently made estimation more honest and more useful.

Estimate late, not early. The cone narrows only through learning. Do spikes. Write some exploratory code. Talk to the teams whose systems you’ll integrate with. Work with what you do know to discover what you don’t know. Resist the pressure to give numbers before you’ve done the learning that would make them meaningful.

Don’t over-decompose before starting. This one is counterintuitive, and I’ve watched teams fall into it repeatedly. The instinct when facing uncertainty is to break the work down into ever-smaller pieces, hoping that enough decomposition will produce a reliable estimate. It won’t - and the time spent in those sessions is time you’re not spending learning from actual work. There’s a real cost here that’s easy to miss: elaborate pre-work plans also calcify. Teams cling to them past the point where they reflect reality, which makes the eventual divergence more disorienting, not less. Start with simpler plans that are easier to adjust.

Give ranges, not points. “Three to five weeks” is more honest than “four weeks” and almost as actionable. If someone says they can only work with a single number, give them the midpoint of your range - but tell them it’s a midpoint. Never let a range collapse into a point estimate in their mind without your knowledge. Agree on using the cone of uncertainty with your stakeholders and refer to it whenever you’re communicating estimates.

Make your assumptions visible. An estimate is only as good as what it assumes. If the estimate assumes no scope changes, say so. If it assumes a particular technical approach, say so. If it assumes someone else’s team delivers something by a certain date, say so. Assumptions that live in your head become misunderstandings that surface at the worst moment.
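If you record estimates anywhere (a ticket, a planning doc, a spreadsheet export), one way to keep the range and its assumptions from getting separated is to store them as a single object. This is a minimal sketch; the `Estimate` class and its field names are hypothetical, and the example values are the "4-6 weeks" estimate quoted earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    """A range-based estimate that always travels with its assumptions."""

    low_weeks: float
    high_weeks: float
    assumptions: tuple[str, ...] = ()

    def __post_init__(self) -> None:
        if self.low_weeks > self.high_weeks:
            raise ValueError("low_weeks must not exceed high_weeks")

    @property
    def midpoint(self) -> float:
        """The single number to hand over only when someone insists on one."""
        return (self.low_weeks + self.high_weeks) / 2

    def describe(self) -> str:
        """Render the range, the labeled midpoint, and every assumption."""
        text = f"{self.low_weeks:g}-{self.high_weeks:g} weeks (midpoint {self.midpoint:g})"
        if self.assumptions:
            text += ", assuming " + "; ".join(self.assumptions)
        return text


estimate = Estimate(
    low_weeks=4,
    high_weeks=6,
    assumptions=(
        "requirements don't change",
        "we get answers on the API question by next Friday",
    ),
)
print(estimate.describe())
```

The design choice worth noting is that there is no way to print the midpoint without the range next to it, so the number cannot quietly collapse into a point estimate on its way to a roadmap.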

Track accuracy over time - without punishment. Not to blame people. To calibrate. Teams that have been estimating together for six months and reviewing their accuracy develop a shared sense of where they systematically over- or underestimate. That calibration is genuinely useful, and it compounds over time. But punishing inaccurate estimates achieves the opposite: it teaches teams to pad estimates defensively, and padded estimates are even less useful than honest uncertain ones. Wrong estimates in a complex domain are not a character failing. They’re a property of the domain.
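A calibration review does not need tooling beyond a list of past estimates and outcomes. The sketch below assumes you have that history at the team level; `bias` and `hit_rate` are hypothetical helper names and the numbers are made up for illustration.

```python
from statistics import median


def bias(history: list[tuple[float, float]]) -> float:
    """Median of actual / estimated over (estimated, actual) pairs.

    A value above 1 means the team tends to underestimate; below 1, to
    overestimate. The median keeps one runaway item from dominating.
    """
    if not history:
        raise ValueError("need at least one completed item")
    return median(actual / estimated for estimated, actual in history)


def hit_rate(ranges: list[tuple[float, float, float]]) -> float:
    """Fraction of (low, high, actual) items whose actual fell inside the range."""
    if not ranges:
        raise ValueError("need at least one completed item")
    hits = sum(1 for low, high, actual in ranges if low <= actual <= high)
    return hits / len(ranges)


# Made-up team history, in weeks.
points = [(5, 8), (3, 3), (8, 12), (2, 3)]
ranged = [(3, 5, 4), (4, 6, 9), (1, 2, 2), (2, 4, 5)]

print(f"typical actual/estimate ratio: {bias(points):.2f}")
print(f"share of actuals inside the stated range: {hit_rate(ranged):.0%}")
```

Both numbers describe the team's history as a whole, not individuals. A low hit rate is an argument for wider ranges next time, not for a harder conversation with whoever gave the estimate.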

Split anything over 8. A story that’s a 13 or a 21 on the Fibonacci scale is almost always a story that contains hidden complexity you haven’t surfaced yet. The act of splitting it forces you to articulate what you actually know. Often, you discover that two of the three sub-stories are actually small, and one is where all the uncertainty lives. Now you have useful information.

The Uncomfortable Truth for Both Camps

Here’s what I think is actually true, and I’ve found it unpopular in equal measure with both estimation enthusiasts and #NoEstimates advocates.

Estimates are a form of communication, not calculation. Their purpose is to enable coordination and decision-making under uncertainty - not to predict the future. The failure modes of estimation are not random. They are systematic: estimating too early, treating ranges as points, treating estimates as commitments, ignoring what the Cone of Uncertainty tells you about epistemic position, imposing estimates on the people who have to execute them.

There’s a trap worth naming here - what Dalmijn calls the Complex Work Estimation Fallacy: the belief that by estimating more often, refining the process, and working together longer, a team will eventually produce accurate estimates. It won’t. The ceiling on estimation accuracy in a Complex domain is not a function of team maturity. It’s a function of the domain itself. You can get better. You will not get accurate. Confusing those two outcomes is how organizations end up punishing teams for things that are fundamentally outside their control.

Refusing to estimate doesn’t fix any of this. It just opts out of the coordination game. For a team that truly operates independently, ships constantly, and has no external commitments - that might work. Those teams exist. I’ve been on one. It was a specific organizational context that most of us will not be in for most of our careers.

For everyone else, the choice is not between “bad estimates” and “no estimates.” The choice is between unconscious, low-quality estimates (which your organization will make anyway, with or without you) and explicit, humble, range-based, assumption-visible estimates that give the people around you something real to work with.

Joseph Pelrine was right that task estimation lives in the Complex domain. That’s exactly why we need to approach it with a complexity mindset: probe, observe, respond. Estimate, track, calibrate. Do it again. The cone doesn’t narrow by waiting. It narrows by learning.

Stop trying to get out of estimating. Start getting better at it - and clear-eyed about how good “better” can actually get.